The Verification Methodology of Data Backbone Reliability

Modern intelligence systems succeed or fail on the integrity of the data infrastructure beneath them. DataTrionyx maintains a technical vetting framework to ensure every architectural solution is viable, scalable, and resilient.

Integrity Benchmarks

We do not deploy until all three layers of viability testing are satisfied: structural audit, security hardening, and scalability proofing. This structured approach removes ambiguity from large-scale data migrations and intelligence deployments.

Structural Audit

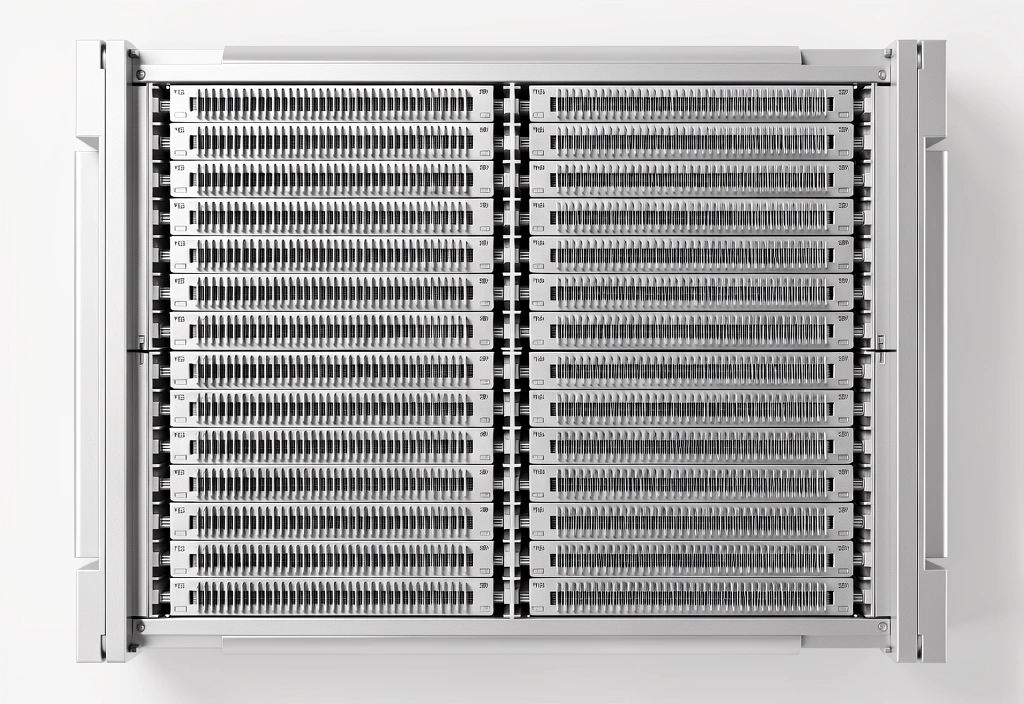

Every system proposal undergoes an audit of its base compute and storage logic. We assess both the physical and the virtual infrastructure to confirm that it can support high-load intelligence processing.

- Hardware Lifecycle Planning

- Latency Map Analysis

- Redundancy Factor Scoring

Security Hardening

Protection is woven into the architectural fabric, not added as a layer. Our standards require zero-trust perimeter logic for all mission-critical data pathways and intelligence assets.

- End-to-End Encryption Standards

- Identity & Access Controls

- Real-time Threat Monitoring

Scalability Proofing

We stress-test the environment against future growth projections. Infrastructure must remain performant as data volume increases by orders of magnitude over multi-year cycles.

- Elastic Compute Verification

- Database Horizontal Sharding

- Load Balancing Stress Tests
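
As an illustration of what a horizontal sharding check might look like, the sketch below routes record keys to shards with a stable hash and reports how evenly they land. It is a minimal example under stated assumptions, not a description of DataTrionyx tooling: the function names `shard_for` and `shard_balance`, the key format, and the shard count are all hypothetical.

```python
import hashlib
from collections import Counter


def shard_for(key: str, shard_count: int) -> int:
    """Map a record key to a shard index using a stable hash (hypothetical helper)."""
    # SHA-256 is used so the mapping is identical across processes and restarts,
    # unlike Python's built-in hash(), which is randomized per process for strings.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


def shard_balance(keys, shard_count: int) -> list:
    """Return the number of keys routed to each shard, in shard-index order."""
    counts = Counter(shard_for(key, shard_count) for key in keys)
    return [counts.get(index, 0) for index in range(shard_count)]


if __name__ == "__main__":
    # Illustrative keys only; a real check would sample production identifiers.
    sample_keys = [f"record-{i}" for i in range(10_000)]
    print(shard_balance(sample_keys, 4))
```

Modulo routing like this remaps most keys whenever the shard count changes, so environments that expect to add shards over time often use consistent hashing instead.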

Architectural Standards for Modern Intelligence

Protocol Unification

We enforce strict communication protocols between disparate data sources to prevent intelligence gaps. This ensures a single source of truth across the entire enterprise stack.

Immutable Logging

Accountability is a core infrastructure requirement. Every action within our deployed environments is logged to an immutable ledger for audit and forensics.
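
One common way to make a log tamper-evident is to chain entries by hash, so that each record commits to the one before it. The sketch below shows that idea in miniature. It is an assumption about how such a ledger could work, not the DataTrionyx implementation: the class `HashChainedLog` and the `actor` and `action` fields are hypothetical.

```python
import hashlib
import json


class HashChainedLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash, actor, action):
        # Serialize with sorted keys so the same fields always hash identically.
        payload = json.dumps(
            {"prev": prev_hash, "actor": actor, "action": action},
            sort_keys=True,
        ).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, actor, action):
        """Record an action and link it to the current tail of the chain."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        entry = {
            "prev": prev_hash,
            "actor": actor,
            "action": action,
            "hash": self._digest(prev_hash, actor, action),
        }
        self._entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash; return False if any entry was altered or reordered."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            expected = self._digest(entry["prev"], entry["actor"], entry["action"])
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True
```

A chain like this detects edits only when someone re-verifies it, and an attacker who can rewrite the whole log can also recompute every hash. Production ledgers therefore usually anchor the latest hash somewhere the log writer cannot modify.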

Resource Optimization

Our vetting process identifies redundant overhead. We optimize for energy efficiency and compute cost without compromising the intelligence capabilities of the system.

System Verification Stages

A system within the DataTrionyx ecosystem moves through four stages, from initial design to steady-state operations.

Blueprint Mapping

Defining the data paths and compute nodes for the intelligence baseline.

Component Isolation

Testing individual modular units for failure tolerance before integration.

Final Vetting

Full-system stress testing under simulated peak enterprise workloads.

Steady State

Continuous monitoring and automated optimization protocols.

Translating complexity into viable enterprise results.

DataTrionyx is more than just hardware and code. We are the architects of the systems that power modern business decisions. Our infrastructure standards ensure that when you need intelligence, the path is clear, verified, and ready.

Technical Architecture Team

Standards & Compliance Division

Start Your Infrastructure Audit

Identify the performance gaps in your current data backbone. Our team is ready to apply DataTrionyx standards to your environment.